Monitoring and Gathering Metrics from Kubernetes Audit Logs

Log files, streams and messages provide lots of information about what's going on at runtime. Since Kubernetes 1.7, we've been able to see what's going on inside of our cluster with Kubernetes audit logs.

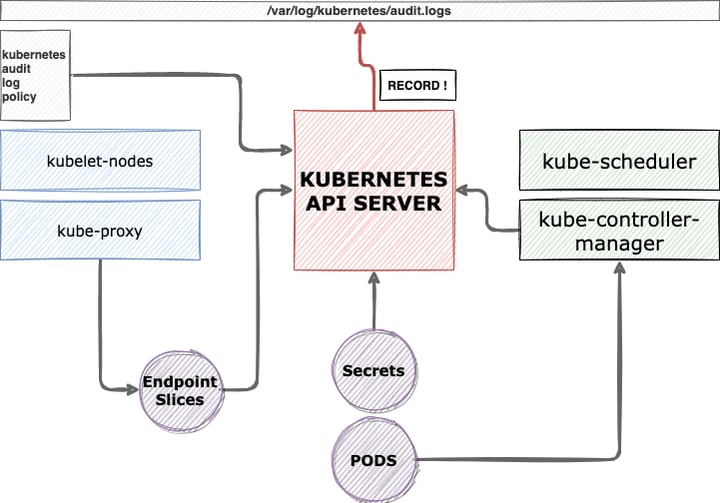

Kubernetes records cluster activity according to the rules in an audit policy file. Every action is routed through and executed by the Kubernetes API server, so it works as a hub. This design lets us track activity from one central place.

If you take a look at the audit logs from a security perspective, you can capture API Server activities as well as non-permitted actions and detect who is trying to access application and cluster resources.
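
As a rough illustration of the security use case, the sketch below reads audit events (one JSON object per line) and counts the requests that the API server rejected with HTTP 403, grouped by user. The field names (`stage`, `user.username`, `responseStatus.code`) follow the `audit.k8s.io/v1` event schema; the sample events and the function name are made up for this example.

```python
import json
from collections import Counter

def forbidden_by_user(lines):
    """Count HTTP 403 responses per username from audit log lines."""
    counts = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        # Only look at the completed stage so each request is counted once.
        if event.get("stage") != "ResponseComplete":
            continue
        if event.get("responseStatus", {}).get("code") == 403:
            counts[event.get("user", {}).get("username", "unknown")] += 1
    return counts

# Hypothetical sample events, trimmed to the fields used above.
sample = [
    '{"stage":"ResponseComplete","verb":"get","user":{"username":"dev-1"},"responseStatus":{"code":403}}',
    '{"stage":"ResponseComplete","verb":"list","user":{"username":"dev-1"},"responseStatus":{"code":200}}',
    '{"stage":"RequestReceived","verb":"get","user":{"username":"dev-2"}}',
    '{"stage":"ResponseComplete","verb":"delete","user":{"username":"dev-2"},"responseStatus":{"code":403}}',
]

if __name__ == "__main__":
    for user, count in forbidden_by_user(sample).most_common():
        print(f"{user}: {count} forbidden request(s)")
```

In practice you would pass an open file handle for the audit log instead of the sample list.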

Besides the security perspective, you can also detect slow API Server calls, etcd performance issues, etc.
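
One possible way to spot slow calls is to compare the two timestamps that each audit event carries: `requestReceivedTimestamp` and `stageTimestamp`. The sketch below assumes UTC timestamps with microsecond precision and skips `watch` requests, which are long-running by design. The one-second threshold, the sample events and the function names are assumptions for illustration.

```python
import json
from datetime import datetime

def parse_ts(value):
    """Parse an RFC 3339 UTC timestamp such as 2021-09-03T10:00:00.250000Z."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))

def slow_requests(lines, threshold_seconds=1.0):
    """Yield (seconds, verb, requestURI) for completed requests over the threshold."""
    for line in lines:
        if not line.strip():
            continue
        event = json.loads(line)
        if event.get("stage") != "ResponseComplete":
            continue
        if event.get("verb") == "watch":
            continue  # watches stay open on purpose
        started = parse_ts(event["requestReceivedTimestamp"])
        finished = parse_ts(event["stageTimestamp"])
        elapsed = (finished - started).total_seconds()
        if elapsed >= threshold_seconds:
            yield elapsed, event.get("verb"), event.get("requestURI")

# Hypothetical sample events, trimmed to the fields used above.
sample = [
    '{"stage":"ResponseComplete","verb":"list","requestURI":"/api/v1/pods",'
    '"requestReceivedTimestamp":"2021-09-03T10:00:00.000000Z",'
    '"stageTimestamp":"2021-09-03T10:00:02.500000Z"}',
    '{"stage":"ResponseComplete","verb":"get","requestURI":"/api/v1/nodes",'
    '"requestReceivedTimestamp":"2021-09-03T10:00:03.000000Z",'
    '"stageTimestamp":"2021-09-03T10:00:03.050000Z"}',
]

if __name__ == "__main__":
    for seconds, verb, uri in slow_requests(sample):
        print(f"{seconds:.2f}s {verb} {uri}")
```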

How to?

Configure

In a self-managed Kubernetes cluster, you have to configure some of the parameters yourself, based on your setup.

The parameters that you have to add into your kube-apiserver.yaml file are:

```
--audit-policy-file=<AUDIT_POLICY_FILE_PATH>
--audit-log-path=<WHERE_TO_WRITE_LOGS>
```

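--audit-policy-file: Path to your audit policy file, which we write in the next section.

--audit-log-path: Path of the file that audit events are written to.

You can also set retention and rotation values for the audit log files (--audit-log-maxage, --audit-log-maxbackup and --audit-log-maxsize) according to the disk space available on your instances.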
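
A quick sanity check, sketched below, is to scan the manifest text for `--audit-*` flags and report which of the two required ones are missing. The manifest excerpt and its paths are hypothetical, and the helper names are made up for this example.

```python
import re

# Matches flags such as --audit-log-path=/var/log/kubernetes/audit.log
AUDIT_FLAG = re.compile(r"--(audit-[a-z-]+)=(\S+)")
REQUIRED = ("audit-policy-file", "audit-log-path")

def audit_flags(manifest_text):
    """Return a dict of audit flag names to values found in the manifest text."""
    return dict(AUDIT_FLAG.findall(manifest_text))

def missing_flags(manifest_text):
    """Return the required audit flags that are not set."""
    found = audit_flags(manifest_text)
    return [name for name in REQUIRED if name not in found]

# Hypothetical excerpt of a kube-apiserver.yaml command section.
sample_manifest = """
    - kube-apiserver
    - --audit-policy-file=/etc/kubernetes/audit-policy.yaml
    - --audit-log-path=/var/log/kubernetes/audit.log
    - --audit-log-maxage=30
"""

if __name__ == "__main__":
    print(audit_flags(sample_manifest))
    print("missing:", missing_flags(sample_manifest))
```

If the API server runs as a static Pod, the policy file and the log directory also have to be mounted into the container, otherwise the flags point at paths the process cannot see.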

For more details: https://kubernetes.io/docs/tasks/debug-application-cluster/audit/

Audit Policy files

An audit policy file defines which events the API server records and how much detail it keeps for each one. Let's check an example policy YAML file.

The example policy records the get, list and watch verbs for resources in the core (api/v1), apps and certificates.k8s.io API groups. The Request level stores the event metadata together with the request body, but not the response body.

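```yaml
apiVersion: audit.k8s.io/v1
kind: Policy
rules:
  # Record metadata and request bodies for read-only calls.
  - level: Request
    verbs: ["get", "list", "watch"]
    resources:
      - group: ""   # core
      - group: "apps"
      - group: "certificates.k8s.io"
```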
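
Policy rules are evaluated in order, and the first rule that matches a request decides its audit level. The sketch below mimics that behaviour for the verb and API group only; it is a simplified illustration rather than the API server's implementation, and it ignores users, namespaces, resource names and non-resource URLs. The function name is made up for this example.

```python
def audit_level(rules, verb, group):
    """Return the level of the first matching rule, or "None" if nothing matches."""
    for rule in rules:
        verbs = rule.get("verbs")
        groups = [r.get("group", "") for r in rule.get("resources", [])]
        # An omitted "verbs" or "resources" list matches everything.
        if verbs and verb not in verbs:
            continue
        if groups and group not in groups:
            continue
        return rule["level"]
    # No rule matched: the event is not logged.
    return "None"

# The example policy from this post, expressed as Python data.
rules = [
    {
        "level": "Request",
        "verbs": ["get", "list", "watch"],
        "resources": [{"group": ""}, {"group": "apps"}, {"group": "certificates.k8s.io"}],
    }
]

if __name__ == "__main__":
    print(audit_level(rules, "get", "apps"))     # read on a listed group
    print(audit_level(rules, "delete", "apps"))  # verb not covered
    print(audit_level(rules, "list", "batch"))   # group not covered
```

With this policy, write operations such as delete are not recorded at all, so add further rules if you need them for security auditing.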

So far, we have focused on configuring the audit logs. You can now tail the logs from the file you passed to the --audit-log-path parameter.

Next, we can dig into alerting based on the audit logs.

How to ship audit logs?

This section will show an example solution with Promtail and Loki.

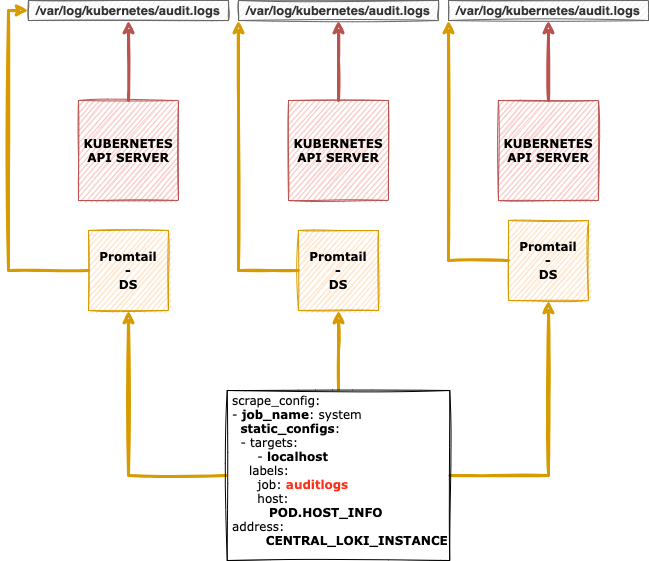

Promtail is a log shipper that can tail logs from files, STDOUT and other sources. It was developed by the Grafana community and works very well with Loki.

Promtail has a simple configuration file, so we only need to set the path of the Kubernetes audit logs.

You can find more details about Promtail from this link: https://grafana.com/docs/loki/latest/clients/promtail/

What about Loki? Loki is a centralised log server that is easy to set up. It was also developed by the Grafana community, and it uses Prometheus-style labels to index logs. Because Loki indexes only the labels rather than the full log text, well-chosen labels can keep your queries fast.

You can set up Loki in a VM or Kubernetes:

Helm Installation

https://grafana.com/docs/loki/latest/installation/helm/

Docker

https://grafana.com/docs/loki/latest/installation/docker/

Notice: You can also use Elasticsearch, Splunk, etc., to store your log messages, depending on your infrastructure.

Once Loki is installed, let's deploy our log shippers (Promtail) on the hosts where the Kubernetes audit logs are written.

In the end, you need a topology in which Promtail ships the audit logs to Loki. The number of Promtail instances depends on the number of master nodes in your cluster, since each API server writes its own audit log.

Next Step?

We have completed the log shipper part and started sending log messages to a centralised log store. In this blog post we use Loki as the centralised log instance, but depending on your circumstances you can configure your log shippers to send logs to Elasticsearch, Humio or Splunk instead.

To integrate Promtail with your Loki instance, adjust the clients section of your Promtail configuration file like this:

```yaml
server:
  ...
clients:
  - url: http://LOKI_HOST_ADDR:3100/loki/api/v1/push
```

Notice: Promtail is distributed as a single binary, so the download does not include a configuration file. To create one, you can check this link: https://grafana.com/docs/loki/latest/clients/promtail/configuration/

Once the Loki address is set in the Promtail configuration, Promtail should start shipping audit logs to your Loki instance.
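
If nothing shows up, it can help to rule out connectivity problems by pushing a single test line to the same endpoint by hand. The sketch below builds the JSON body that the Loki push API expects (streams with a label set and `[timestamp in nanoseconds, line]` pairs) and posts it with the standard library. The `job` label value and the function names are assumptions, and `LOKI_HOST_ADDR` is the same placeholder as in the configuration.

```python
import json
import time
import urllib.request

def build_push_payload(line, labels, ts_ns=None):
    """Build a Loki push API body containing a single log line."""
    if ts_ns is None:
        ts_ns = time.time_ns()
    # Loki expects the timestamp as a string of nanoseconds since the epoch.
    return {"streams": [{"stream": labels, "values": [[str(ts_ns), line]]}]}

def push(url, payload, timeout=5):
    """POST the payload to Loki and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status

if __name__ == "__main__":
    payload = build_push_payload("connectivity test", {"job": "k8s-audit"})
    print(json.dumps(payload))
    # Uncomment after replacing LOKI_HOST_ADDR with a real address:
    # print(push("http://LOKI_HOST_ADDR:3100/loki/api/v1/push", payload))
```

A 2xx status means Loki accepted the line, so any remaining problem is more likely in the Promtail configuration than in the network path.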

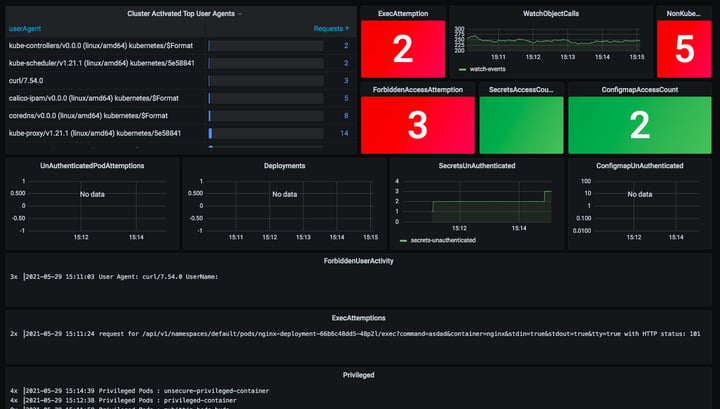

In this stack, we use Loki as a Grafana data source to create dashboards and alerts according to the log outputs.
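
As a sketch of what such a dashboard or alert query might look like, the snippet below builds a LogQL expression that counts 403 responses per user, plus the matching `query_range` URL for testing it outside Grafana. It assumes the audit lines are shipped as raw JSON under a `job="k8s-audit"` label (a made-up label value) and relies on the LogQL `json` parser flattening nested fields with underscores, so `responseStatus.code` becomes `responseStatus_code`.

```python
from urllib.parse import urlencode

def forbidden_by_user_query(job="k8s-audit", window="5m"):
    """LogQL: number of 403 responses per user over the given window."""
    return (
        'sum by (user_username) (count_over_time({job="%s"} | json '
        '| responseStatus_code="403" [%s]))' % (job, window)
    )

def query_range_url(base_url, query, start_ns, end_ns, step="60s"):
    """Build a Loki query_range URL; start and end are Unix epoch nanoseconds."""
    params = urlencode({"query": query, "start": start_ns, "end": end_ns, "step": step})
    return f"{base_url}/loki/api/v1/query_range?{params}"

if __name__ == "__main__":
    query = forbidden_by_user_query()
    print(query)
    print(query_range_url("http://LOKI_HOST_ADDR:3100", query, 0, 3_600_000_000_000))
```

The same expression can be pasted into a Grafana panel or an alert rule that uses the Loki data source.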

You can check example dashboards here: https://github.com/WoodProgrammer/kubernetes-audit-dashboards

Audit dashboard view on Grafana

In Public Cloud

Managed Kubernetes services like EKS, AKS or GKE can route cluster audit logs into the provider's centralised logging service (AWS CloudWatch, for example).

This lets us analyse the audit logs without touching the control plane configuration. If you want to query the logs and raise alerts when a suspicious event shows up, you can look at Soprano, a proof-of-concept tool.

Soprano runs queries against the specified log groups and exposes the results as Prometheus metrics on an exporter endpoint. That way, you can monitor your EKS cluster with Prometheus and Grafana.
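
To make the idea concrete, the sketch below turns the result of a log query into the Prometheus text exposition format that an exporter endpoint serves. This is not Soprano's actual implementation; the metric name, labels and values are invented, and label values are not escaped, which a real exporter would need to handle.

```python
def render_metrics(name, help_text, samples):
    """Render (labels, value) pairs as Prometheus text exposition format."""
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} gauge"]
    for labels, value in samples:
        label_str = ",".join(f'{key}="{val}"' for key, val in sorted(labels.items()))
        lines.append(f"{name}{{{label_str}}} {value}")
    return "\n".join(lines) + "\n"

# Hypothetical result of a log query: forbidden requests per user.
query_result = [({"user": "dev-1"}, 3), ({"user": "dev-2"}, 1)]

if __name__ == "__main__":
    print(render_metrics(
        "audit_forbidden_requests",
        "Forbidden requests found in the last query window",
        query_result,
    ), end="")
```

Prometheus can scrape text in this shape from any HTTP endpoint, and Grafana can then alert on the resulting series.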

Conclusion

Kubernetes gives us many ways to analyse and gather valuable information from the API server, such as eBPF-based tracing tools and audit logs. But you have to care about the API server just as much as you do about the worker nodes.

Kubernetes also announced API server tracing, a new feature that is still in alpha, in version 1.22: https://kubernetes.io/blog/2021/09/03/api-server-tracing/

We get new tools to track and trace our clusters every single day!

Log messages are the first step to gaining visibility into what is going on inside the cluster. Start enabling audit logs on your clusters today! Don't hesitate to get in touch if you need help.